안녕하세요 seq2seq 모델을 만들어보고 있는데요… 분명히 여기저기서 본 코드와 크게 차이가 없고… 문제도 없는것 같은데 학습이 잘되지 않습니다. 어느부분이 문제인지 고수분들의 도움 부탁드리겠습니다 ㅠㅠ torch version == 1.7.1 입니다!

trn = pd.read_pickle('Chatbot_preprocess.pkl')

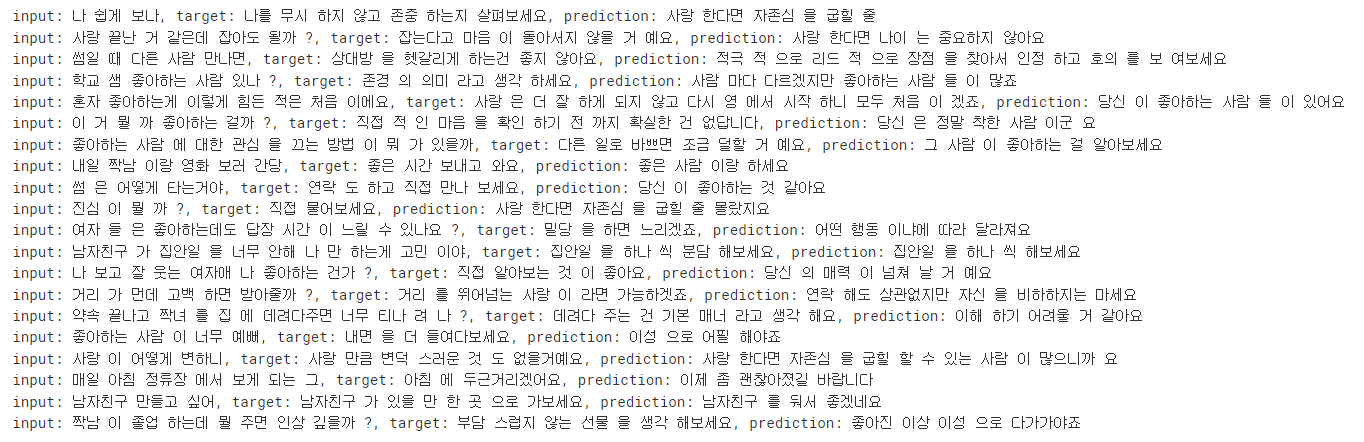

trn.head(2)

src와 tar열은 전처리를 하여 각각 리스트로 되어 있습니다.

src_tokenizer = Tokenizer(filters=None, lower=False)

tar_tokenizer = Tokenizer(filters=None, lower=False)

src_tokenizer.fit_on_texts(trn.src)

tar_tokenizer.fit_on_texts(trn.tar)

src2idx = src_tokenizer.word_index

tar2idx = tar_tokenizer.word_index

idx2src = dict((i, w) for w, i in src2idx.items())

idx2tar = dict((i, w) for w, i in tar2idx.items())

src_vocab_size = len(src2idx) + 1

tar_vocab_size = len(tar2idx) + 1

print(src_vocab_size, tar_vocab_size)

src_input = src_tokenizer.texts_to_sequences(trn.src)

tar_input = tar_tokenizer.texts_to_sequences(trn.tar)

max_src_len = max(list(len(sent) for sent in src_input))

max_tar_len = max(list(len(sent) for sent in tar_input))

src_input = pad_sequences(src_input, maxlen=max_src_len, padding='post')

tar_input = pad_sequences(tar_input, maxlen=max_tar_len, padding='post')

print(src_input.shape, tar_input.shape)

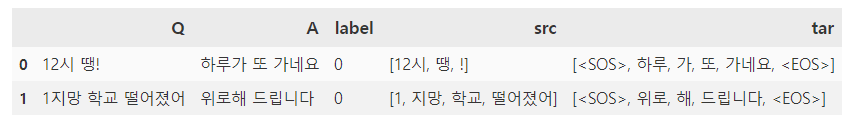

src_vocab_size는 8740개, tar_vocab_size는 6618개 입니다. padding도 정상적으로 완료했습니다.

trn_size = int(len(src_input) * 0.8)

trn_src = src_input[:trn_size]

trn_tar = tar_input[:trn_size]

vld_src = src_input[trn_size:]

vld_tar = tar_input[trn_size:]

print(trn_src.shape, trn_tar.shape, vld_src.shape, vld_tar.shape)

class CustomDataset(Dataset):

def __init__(self, enc_seq, dec_seq):

super().__init__()

self.enc_seq = enc_seq

self.dec_seq = dec_seq

def __len__(self):

return len(self.enc_seq)

def __getitem__(self, idx):

enc_data = self.enc_seq[idx, :]

dec_data = self.dec_seq[idx, :]

return torch.tensor(enc_data, dtype=torch.long), torch.tensor(dec_data, dtype=torch.long)

class Encoder(nn.Module):

def __init__(self, enc_vocab_size, emb_dim, enc_dim, n_layers):

super().__init__()

self.enc_vocab_size = enc_vocab_size

self.enc_dim = enc_dim

self.embedding = nn.Embedding(enc_vocab_size, emb_dim)

self.gru = nn.GRU(input_size=emb_dim, hidden_size=enc_dim, num_layers=n_layers, batch_first=True, dropout=0.5)

self.dropout = nn.Dropout(0.3)

def forward(self, enc_input):

embed = self.dropout(self.embedding(enc_input))

outputs, enc_states = self.gru(embed)

return outputs, enc_states

class Decoder(nn.Module):

def __init__(self, dec_vocab_size, emb_dim, dec_dim, n_layers):

super().__init__()

self.dec_vocab_size = dec_vocab_size

self.dec_dim = dec_dim

self.embedding = nn.Embedding(dec_vocab_size, emb_dim)

self.gru = nn.GRU(input_size=emb_dim, hidden_size=dec_dim, num_layers=n_layers, batch_first=True, dropout=0.5)

self.fc = nn.Linear(dec_dim, dec_vocab_size)

self.dropout = nn.Dropout(0.3)

def forward(self, dec_input, initial_states):

dec_input = torch.unsqueeze(dec_input, 1)

embed = self.dropout(self.embedding(dec_input))

outputs, dec_states = self.gru(embed, initial_states)

# outputs = [batch_size, 1, dec_dim]

pred = self.fc(outputs)

return pred, dec_states

class Seq2Seq(nn.Module):

def __init__(self, encoder, decoder, device, max_tar_len):

super().__init__()

self.encoder = encoder

self.decoder = decoder

self.device = device

self.max_tar_len = max_tar_len

def forward(self, src, tar):

_, hidden_states = self.encoder(src)

tar_len = tar.shape[1]

batch_size = tar.shape[0]

outputs = torch.zeros(batch_size, 1, self.decoder.dec_vocab_size, device=self.device)

# Teacher Forcing 구현

for idx in range(1, tar_len):

output, hidden_states = self.decoder(tar[:, idx-1], hidden_states)

outputs = torch.cat([outputs, output], dim=1)

return outputs

def inference(self, src, idx2tar, max_tar_len):

self.encoder.eval()

self.decoder.eval()

_, hidden_states = self.encoder(src)

input_word = torch.tensor([1], dtype=torch.long, device=device)

result = []

idx = 0

while True:

word, hidden_states = self.decoder(input_word, hidden_states)

input_word = torch.tensor([torch.argmax(word)], dtype=torch.long, device=device)

word = word.detach().to('cpu').numpy()

word = np.argmax(np.ravel(word))

word = idx2tar[word]

result.append(word)

if word == '<EOS>' or idx > max_tar_len:

result = " ".join(result[:-1])

break

idx += 1

return result

class cfg:

epochs = 5

batch_size = 16

patience = 5

trn_dataset = CustomDataset(trn_src, trn_tar)

vld_dataset = CustomDataset(vld_src, vld_tar)

trn_dataloader = DataLoader(trn_dataset, batch_size=cfg.batch_size, shuffle=False)

vld_dataloader = DataLoader(vld_dataset, batch_size=cfg.batch_size, shuffle=False)

dataloader = {'train':trn_dataloader, 'valid':vld_dataloader}

필요한 클래스들을 정의하고 dataloader를 생성했습니다.

best_score = np.inf

patience = 1

encoder = Encoder(src_vocab_size, 300, 512, 1)

decoder = Decoder(tar_vocab_size, 300, 512, 1)

model = Seq2Seq(encoder, decoder, device, max_tar_len)

criterion = nn.CrossEntropyLoss(ignore_index=0)

optimizer = optim.Adam(model.parameters())

best_score = np.inf

patience = 0

for epoch in range(cfg.epochs):

print(f"start {epoch} / {cfg.epochs}...............")

model.to(device)

for phase in ['train', 'valid']:

if phase == 'train':

model.train()

elif phase == 'valid':

model.eval()

losses = []

acc_list = []

pbar = tqdm(dataloader[phase], unit='batch', desc=phase)

for enc_input, dec_input in pbar:

enc_input = enc_input.to(device)

dec_input = dec_input.to(device)

optimizer.zero_grad()

with torch.set_grad_enabled(phase=='train'):

outputs = model(enc_input, dec_input)

outputs = outputs[:, 1:, :].contiguous().view(-1, outputs.shape[-1])

dec_input = dec_input[:, 1:].contiguous().view(-1)

loss = criterion(outputs, dec_input)

outputs = outputs.detach().to('cpu')

dec_input = dec_input.detach().to('cpu')

if phase == 'train':

loss.backward()

torch.nn.utils.clip_grad_norm_(model.parameters(), 1)

optimizer.step()

loss = loss.detach().to('cpu').item()

losses.append(loss)

acc = accuracy_score(dec_input.numpy(), torch.argmax(outputs, dim=-1).numpy())

acc_list.append(acc)

pbar.set_postfix({'loss':loss, 'acc':format(acc, ".2%")})

mean_loss = np.mean(losses)

print(f"{phase} Mean Loss: {mean_loss:.5f}, Accuracy: {np.mean(acc_list):.2%}")

if best_score > mean_loss:

best_score = mean_loss

patience = 0

elif best_score < mean_loss:

patience += 1

if patience > cfg.patience:

print(f"Stop Iteration!!! best score : {best_score:.5f}")

break

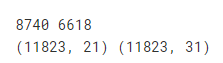

학습을 진행합니다.

보시다시피 val_loss가 너무 엉망입니다…

for _, rows in trn.tail(3000).sample(20).iterrows():

src = [rows['src']]

tar = [rows['tar']]

src = src_tokenizer.texts_to_sequences(src)

src = pad_sequences(src, maxlen=max_src_len, padding='post')

src = torch.tensor(src, dtype=torch.long, device=device)

prediction = model.inference(src, idx2tar, max_tar_len)

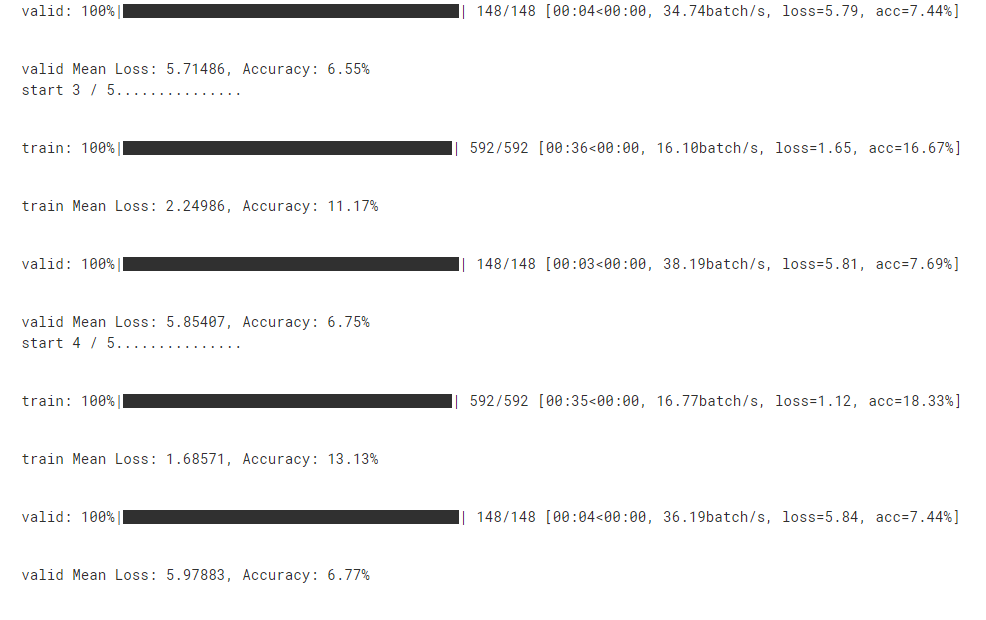

print(f"input: {' '.join(rows['src'])}, target: {' '.join(rows['tar'][1:-1])}, prediction: {prediction}")

inference를 해봤는데 역시 결과가 좋지 않습니다…

몇일동안 여기저기 온갖 자료들 보면서 해보고 있는데 어느부분이 잘못된건지 도저히 찾지 못했습니다 ㅠㅠ 고수님들의 도움 부탁드립니다…